Virtualization in DC vs AWS

Without getting into the larger debate of private cloud vs public cloud for the organization as a whole, this document tries to give the numbers that allow a fair comparison between AWS and a private cloud built on OpenStack in terms of performance - and performance alone.

The initial benchmarking and the preparation of these numbers stemmed from a proposed DC migration to a west coast co-located DC at my current employer. The migration did not happen, but it gave us the opportunity to study the performance impact of having our own cloud.

One of the gripes that I've had about our colo infra is that we never seem to have gotten grade-A processors. Almost all of them were hand-me-down servers or mid-level Intel Xeons, sometimes with absurdly low clock speeds. The storage side wasn't very brilliant either.

This was solved when we obtained a test bed from Dell with the latest and greatest hardware in their lab that we could set up and run benchmarks on.

The machine we got was this:

Hosted hardware in the environment consists of:

- PowerEdge R740XD (Quantity: 1)
- (2) Intel Gold 6136 3.0GHz 12C processors
- 384 GB RAM (12x 32GB configuration)
- Dell PERC H740P controller
- (6) 800GB SAS SSDs (RAID10 configuration)
- Mellanox 2 port 25GbE ConnectX-4 adapter
- Dell S5048 25 GbE 48-port switch
- Dell S3048 1GbE management switch (out-of-band management)

Note that this was not the top-of-the-line Platinum processors coupled with dedicated offload hardware for I/O and hypervisor functions (Nitro, in AWS parlance) that AWS had. But it represents a decent compromise between cost and performance and, more importantly, it was something we could hope to replicate in our DC without having to trade an arm and a leg (of the company).

We decided to pick the latest and greatest in the AWS arsenal - a c5.4xlarge with a 100 GB EBS volume - and pit it against our VM, which had the same configuration for CPU, memory and disk.

The VM was set up with CPU passthrough mode - meaning all the flags on the host CPU were exposed to the VM.
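One way to sanity-check that passthrough is really in effect is to compare the `flags` line of `/proc/cpuinfo` on the host and inside the guest. The following is a minimal sketch under that assumption; the helper names `cpu_flags` and `missing_in_guest` are made up for illustration, and the two text inputs are expected to be copies of `/proc/cpuinfo` collected by hand from each machine.

```python
def cpu_flags(cpuinfo_text):
    """Return the set of CPU flags from the first 'flags' line of /proc/cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            return set(value.split())
    return set()


def missing_in_guest(host_text, guest_text):
    """Return host flags that the guest does not expose, sorted for stable output."""
    return sorted(cpu_flags(host_text) - cpu_flags(guest_text))


if __name__ == "__main__":
    # Hypothetical, shortened samples; real files list many more flags.
    host = "processor\t: 0\nflags\t\t: fpu vme avx2 avx512f\n"
    guest = "processor\t: 0\nflags\t\t: fpu vme avx2 hypervisor\n"
    print(missing_in_guest(host, guest))
```

An empty result suggests the guest sees every host flag. A guest normally also reports an extra `hypervisor` flag, which this comparison ignores because it only looks for flags missing from the guest.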

What to compare

Once we had gotten the hardware, we needed to decide what exactly we wanted to compare with AWS. We already knew that we had no hope of competing with them in terms of storage. We also knew we could match AWS in terms of network performance because we had gotten SR-IOV working - which gives VM NICs near bare-metal performance. We ultimately decided on these:

- Raw CPU performance - Compute

- Memory performance - Compute

- Mixed Compute performance - Compilation tests

- Raw Storage benchmarks

- SQLite benchmarks - Real world approximation for storage intensive tasks

Care was taken to match OS, software and library versions as much as possible. We also accounted for the fact that ours was the only VM running on the host by dynamically disabling the extra cores on the host - meaning the only thing that would come into play is the virtualization overhead (or so we hope).
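On Linux, cores can be taken offline at runtime by writing `0` to `/sys/devices/system/cpu/cpuN/online`. The sketch below only illustrates that mechanism and is not the exact procedure we used; the counts (48 logical CPUs, 16 kept online) and the helper names are assumptions, and it does not consider hyperthread siblings or NUMA placement. It defaults to a dry run that prints the equivalent shell commands.

```python
from pathlib import Path

SYSFS_CPU = Path("/sys/devices/system/cpu")


def offline_plan(total_cpus, keep):
    """Return (path, value) pairs that would leave only the first `keep` CPUs online."""
    if not 1 <= keep <= total_cpus:
        raise ValueError("keep must be between 1 and total_cpus")
    return [(SYSFS_CPU / f"cpu{n}" / "online", "0") for n in range(keep, total_cpus)]


def apply_plan(plan, dry_run=True):
    """Print the plan, or write it to sysfs when dry_run is False (needs root)."""
    for path, value in plan:
        if dry_run:
            print(f"echo {value} > {path}")
        else:
            path.write_text(value)


if __name__ == "__main__":
    # Hypothetical numbers: 48 logical CPUs on the host, 16 left for the VM.
    apply_plan(offline_plan(total_cpus=48, keep=16))
```

Writing `1` to the same files brings the cores back, so the change does not survive or require a reboot.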

The host CPU governor was left in powersave mode.
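The active governor can be read per CPU from the cpufreq sysfs files. This is a small sketch for confirming the setting before a run, assuming the standard `scaling_governor` file layout; the function name `governor_counts` is made up for illustration.

```python
from collections import Counter
from pathlib import Path


def governor_counts(base="/sys/devices/system/cpu"):
    """Count how many CPUs report each cpufreq governor under `base`."""
    counts = Counter()
    for gov_file in Path(base).glob("cpu[0-9]*/cpufreq/scaling_governor"):
        counts[gov_file.read_text().strip()] += 1
    return counts


if __name__ == "__main__":
    # Prints an empty Counter on systems without cpufreq support.
    print(governor_counts())
```

A result with a single key means every online CPU uses the same governor.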

The raw compute benchmarks were also later replicated with the host running 3 VMs of 16 cores each, with the tests running on all of them simultaneously.

Below we have the real numbers that we obtained out of these tests, and we also discuss what these numbers may imply. We are presenting a subset of all the benchmarks that we did.

The tests were run using the Phoronix Test Suite, and with direct commands in the case of OpenSSL.
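For the OpenSSL part, the following is a minimal sketch of how such a run can be scripted. It assumes `openssl speed rsa2048` for the single-process run and `openssl speed -multi N rsa2048` for the multi-process run, which may differ from the exact invocation we used, and it assumes the result row has the four-column layout quoted in the tables (newer OpenSSL releases may print extra columns). The function names are illustrative.

```python
import re
import subprocess

# Matches rows such as: rsa 2048 bits 0.000036s 0.000001s  27984.2 959951.4
ROW = re.compile(
    r"^rsa\s+(\d+)\s+bits\s+([\d.]+)s\s+([\d.]+)s\s+([\d.]+)\s+([\d.]+)\s*$"
)


def parse_rsa_row(line):
    """Parse one RSA result row of `openssl speed`; return None if it does not match."""
    match = ROW.match(line.strip())
    if match is None:
        return None
    bits, sign_s, verify_s, sign_rate, verify_rate = match.groups()
    return {
        "bits": int(bits),
        "sign_s": float(sign_s),
        "verify_s": float(verify_s),
        "sign_per_s": float(sign_rate),
        "verify_per_s": float(verify_rate),
    }


def run_openssl_speed(processes=1):
    """Run the benchmark and return the first parsed RSA row, or None."""
    cmd = ["openssl", "speed"]
    if processes > 1:
        cmd += ["-multi", str(processes)]
    cmd.append("rsa2048")
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    for line in result.stdout.splitlines():
        row = parse_rsa_row(line)
        if row is not None:
            return row
    return None
```

Calling `run_openssl_speed()` gives the single-core figures and `run_openssl_speed(processes=16)` the multi-core ones on a 16-vCPU machine.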

Raw CPU performance

Single core ssl signing

| | VM in Dell HW | AWS c5.4xlarge |
|---|---|---|
| rsa 2048 bits, sign/s | 1763.6 | 1613.4 |
| rsa 2048 bits, verify/s | 61026.7 | 54149.8 |

Considering the 2048-bit key, the VM on Dell hardware is 9.3% faster at signing and 12.7% faster at verifying.

Implications:

Considering the CPU models that we see inside the AWS instances, it is a bit surprising that our Gold CPU can be faster. It means that either 1) the AWS hypervisor doesn't allocate as many CPU shares to each vCPU as the underlying CPU can provide, or 2) AWS Platinum processors are decidedly high core count but lower per-core performance than generally available top-end Xeon chips.

This is where the ugly face of what AWS calls the EC2 Compute Unit (ECU) comes into play - what you get as a core is actually defined as x number of ECUs rather than as cores on the CPU directly.

Multi core ssl signing

| | VM in Dell HW | AWS c5.4xlarge |
|---|---|---|
| rsa 2048 bits, sign | 0.000036s | 0.000077s |
| rsa 2048 bits, verify | 0.000001s | 0.000002s |
| rsa 2048 bits, sign/s | 27984.2 | 12994.5 |
| rsa 2048 bits, verify/s | 959951.4 | 437587.3 |

Considering the 2048-bit key, the VM on Dell hardware is 115% faster at signing and 119% faster at verifying.
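The "x% faster" figures are relative differences between the two columns. Here is a minimal sketch of that arithmetic, using the sign/s values copied from the two RSA tables; the function name `percent_faster` is made up for illustration, and the `higher_is_better` switch covers time-based results where a smaller number wins.

```python
def percent_faster(ours, theirs, higher_is_better=True):
    """Return how much faster `ours` is than `theirs`, in percent."""
    ratio = ours / theirs if higher_is_better else theirs / ours
    return (ratio - 1.0) * 100.0


if __name__ == "__main__":
    # sign/s values for the 2048-bit key, Dell VM first, AWS second.
    print(f"single core: {percent_faster(1763.6, 1613.4):.1f}% faster")
    print(f"multi core:  {percent_faster(27984.2, 12994.5):.1f}% faster")
    # Per-signature time from the multi-core table (lower is better).
    print(f"multi core, by time: {percent_faster(0.000036, 0.000077, False):.1f}% faster")
```

The rate-based and time-based results for the multi-core run differ slightly because the per-signature times are rounded to two significant digits.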

Implications:

Multi-core turbo scaling of commodity Xeon Golds is much better than in AWS, where it is probably uneven or turned off to help fit each core into the ECU definition - meaning the lion's share of the power saving / performance improvement from dynamic clock speeds goes to AWS.

One interesting tidbit to mention here is that the turbo scaling that Intel advertises does not happen equally for all processor cores. The achievable turbo frequency drops roughly linearly as the number of active cores increases.

Check this graph out.
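A rough way to observe this on a Linux x86 machine is to sample the per-CPU `cpu MHz` values from `/proc/cpuinfo` while loading a growing number of cores. The sketch below covers only the sampling half and assumes that field is present; the function name `core_mhz` is illustrative, and generating the load is left out.

```python
def core_mhz(cpuinfo_text):
    """Return the 'cpu MHz' value reported for each logical CPU, in file order."""
    speeds = []
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "cpu MHz":
            speeds.append(float(value))
    return speeds


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as f:
            text = f.read()
    except OSError:
        text = ""  # not a Linux system; nothing to report
    speeds = core_mhz(text)
    if speeds:
        print(f"{len(speeds)} CPUs, min {min(speeds):.0f} MHz, max {max(speeds):.0f} MHz")
```

Sampling this once with one busy core and again with all cores busy should show the maximum frequency dropping if turbo bins behave as described.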

Memory

Redis benchmarking

This can be sort of a composite memory test, with the CPU being involved a fair bit.

| VM in Dell HW | AWS c5.4xlarge |
|---|---|
| Average: 1692634.75 Requests Per Second | Average: 1638467.50 Requests Per Second |

Going by these averages, the VM on Dell hardware is 3.3% faster.

Implications:

We believe that this is due to the spill-over effect of the AWS CPUs having lower raw compute performance.

Composite Performance

Linux Kernel Compile test

| VM in Dell HW | AWS c5.4xlarge |
|---|---|
| Average: 60.84 Seconds | Average: 99.57 Seconds |

63% faster

Implications:

This is again a CPU intensive test, and the previous results percolate through.

Storage:

Before putting the numbers down here, let me state that storage is a complicated business. Raw storage performance is not what you get in the real world, and things like OS cache, filesystem choice, fsync choice, RAID cache and optimizations by QCOW make it harder to arrive at an apples-to-apples comparison.

So I am going to just paste the raw results of the entire test suite we ran for the Storage benchmarks below.

- AWS disk

- VM disk through QCOW

Note: The links are down at the moment. I will fix them as soon as I get the chance.

I am more than happy to discuss the specifics of the storage tests over a chat / meet.

Database

Sqlite

We could not run the full PostgreSQL test as part of Phoronix, so we settled for SQLite due to the time crunch. Yes, we know it's not a real DB.

| VM in Dell HW | AWS c5.4xlarge |
|---|---|
| Average: 5.65 Seconds (RAIDed SAS SSD) | Average: 19.81 Seconds |

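But these results are misleading. Here is an interesting thing: the same test on the Dell bare metal, without the VM layer, is far slower than AWS.

| Dell baremetal | AWS c5.4xlarge |
|---|---|
| Average: 64.3825 Seconds | Average: 19.81 Seconds |

The bare metal run takes about 3.25 times as long as AWS.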

Further Discussions:

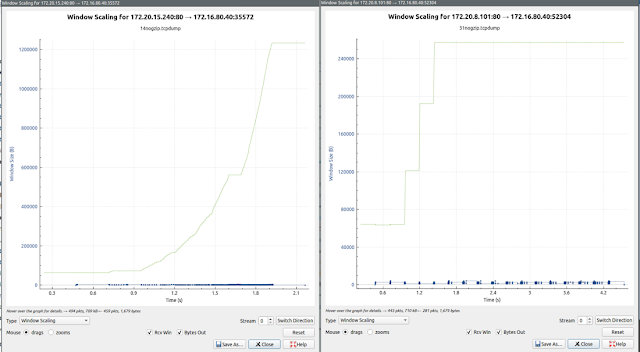

By default fsync is performed after every transaction in sqlite test. When measuring raw fsync performance inside our VM, we found that it can be upto a 100 times slower for each fysnc call.

By default fsync is performed after every transaction in sqlite test. When measuring raw fsync performance inside our VM, we found that it can be upto a 100 times slower for each fysnc call.

Check out the image below:

This means the ridiculously low numbers that we were seeing for the Dell VM are probably due to a QCOW optimization.

Making sense of this involves a deep dive into the default KVM virtio storage cache mode, the effects of the OS cache, and the effects of the RAID cache. Perhaps a separate write-up on this is in order.
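For anyone who wants to reproduce the per-call fsync measurement, here is a minimal sketch of one way to do it. It is not the tool we used; the function name, the 4 KiB payload and the iteration count are arbitrary assumptions. It appends a small block to a temporary file and calls `os.fsync` after each write, which roughly mimics one commit per transaction.

```python
import os
import tempfile
import time


def mean_fsync_seconds(directory=None, iterations=100, payload=b"x" * 4096):
    """Return the mean wall-clock time of one write followed by fsync, in seconds."""
    fd, path = tempfile.mkstemp(dir=directory)
    try:
        start = time.perf_counter()
        for _ in range(iterations):
            os.write(fd, payload)
            os.fsync(fd)  # force the data to stable storage before continuing
        elapsed = time.perf_counter() - start
    finally:
        os.close(fd)
        os.unlink(path)
    return elapsed / iterations


if __name__ == "__main__":
    # Pass a directory on the disk under test; the default is the system temp dir.
    print(f"{mean_fsync_seconds() * 1000:.3f} ms per write+fsync")
```

Running it on the host, inside the VM and on the EBS volume, with `directory` pointing at the disk under test, gives numbers that can be compared directly. A suspiciously small value is a hint that some layer is acknowledging the flush from a cache.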

But one thing we know for sure is that AWS has figured out how to drastically improve storage performance and that we cannot hope to match it unless we pay a storage vendor for a proprietary solution.

Final words:

Raw performance is not the only thing, not even the first thing, that comes up as a consideration when deciding between private and public cloud. A cloud is much more than an infrastructure-as-a-service platform. However, when choosing between the two, I believe that when performance comes up, people usually lean towards the public cloud - this write-up hopes to clear things up (or muddy them further) in that respect.
