A Tale of Apache and Two Congestion Control Algorithms in Linux (With a guest appearance by Openstack and cURL)

Introduction:

The shortest intro would be these two cURL times - one for a bare metal server, and one for the Openstack VM - both running the same application, for a specific test URL.

Problem:

dnslookup: 0.109 | connect: 0.359 | appconnect: 0.000 | pretransfer: 0.359 | starttransfer: 0.624 | total: 6.739 | size: 710012

dnslookup: 0.016 | connect: 0.281 | appconnect: 0.000 | pretransfer: 0.281 | starttransfer: 0.531 | total: 6.958 | size: 710277

dnslookup: 0.000 | connect: 0.265 | appconnect: 0.000 | pretransfer: 0.265 | starttransfer: 0.530 | total: 5.351 | size: 709165

No problem:

dnslookup: 0.000 | connect: 0.265 | appconnect: 0.000 | pretransfer: 0.265 | starttransfer: 0.546 | total: 2.168 | size: 705631

dnslookup: 0.015 | connect: 0.281 | appconnect: 0.000 | pretransfer: 0.281 | starttransfer: 0.561 | total: 2.402 | size: 705354

dnslookup: 0.000 | connect: 0.265 | appconnect: 0.000 | pretransfer: 0.265 | starttransfer: 0.546 | total: 2.434 | size: 705485

For more details, read on.

Little background -

The above difference in the cURL times is seen between a VM inside KVM on Openstack and a bare metal server. At work we were contemplating moving to Openstack for some work loads and increase the density of our colo DC presence. However, the delay in response for the above test done through cURL meant we cannot go ahead with adding this VM to production. Since I was a major proponent of Openstack inside the Org, it fell upon me to demystify this bit.

ACT - I

Now, back to the main story. I had managed to obtain a similar new generation server with lots of cores and close to a terabyte of memory and about 12TB of storage. Here I had to give similar VMs to convince the Project Manager that he can get similar cost advantage in DC, while also improving the density of our DC presence. The VM was launched with a similar config to the bare metal they were serving out of. It was to run simple Apache server with PHP and had the fancy schmancy SRIOV NIC because I wanted to squeeze every little bit of performance I could, to bolster my case.

ACT - II

The team completed their sanity test on the new server, but were tickled by one oddity - One of the tests which essentially returned a lot of information in a simple HTML page took thrice as much time on the new VM. While I independently started on the list mentioned in ACT III, the team's SRE looked at it and figured that Apache spent just as time processing the request, but the times started diverging only during the actual transfer. He even found the exact PHP function call that wrote the data back and figured that was the only slow one. (I had eliminated disk and CPU by this time). But why was it slow inside the VM, but not in the bare metal? This was a real head-scratcher.

Additionally, the team had reported that the test was fine in the browser (same time it took in the bare metal server), but cURL showed the delays they were concerned about.

ACT - III

Now, I began listing out the possible causes.

* Network

* CPU - some weird syscall that was taking a lot of time inside the VM / clock issue

* Disk / IO issue - fsync inside KVM with LVM storage is hundreds of times slower than bare metal (this was before I had been informed that the request processing time was the same in both cases)

Let's talk about CPU first. The first thing that struck me was the test which basically was a page served by apache had parameter in the URL called time. AHA, this must be the little bugger, I thought. Why? This is why. Basically

gettimeofday, clock_gettime syscalls were 77% slower in AWS - the Package cloud blog post (here) is a very good write up of the issue. I enthusiastically ran the little program given in this page, congratulating my memory. Alas, that led me nowhere. I was not affected by this little implementation detail. The clock source in use was KVM and it did not take any inordinate amount of time longer than the bare metal. I verified this with a tiny program of my own.Next thing was to see if the disk was causing the issue. Note that even now I didn't really pay attention to the fact that apache finished processing the request in basically the same time. After a bit of work, I confirmed that disk was not touched at all for the processing of the request. I was growing desperate because, well what else was there that was screwing things up?

Interlude - Red herrings

Onwards to network then. I almost dismissed the network bit because well I had given the VM a SRIOV NIC, and it did not have the overhead of having to go through the host's network stack. Playing around, I hit the first red herring.

I had found that the test URL when hit by cURL by default resulted in a much larger page being transferred. Brainwave! Pass the gzip header to cURL: -H 'Accept-Encoding: gzip' and voila, there was no issue at all. It took the same time in the browser, curl and in both VM and bare metal. Alas, I rejoiced too soon.

The kind Sr. Engineer in the team pointed out that the same non-compressed page via cURL took less than half the time in the bare metal. Now the issue was more qualified - only when the output size was large (uncompressed) the delay was seen.

Second red herring was in the form of Network again. I had been given the URL to test and assumed that the page was served over a domain that resolved to a public IP. Wait a minute, I thought - what path does the VM take to the Internet? Sure enough, it was taking a different path and went out through a different, smaller link. (It is possible for incoming to a public IP and the outgoing path of the same public IP to be different.) Here, I declared this is the issue. I changed the path by altering routes on the host and expected the issue to disappear. Nope, it was still there. Because since the server was under testing, it actually resolved to a private IP, and the traffic flew over IPSEC tunnel from the office (from where we were testing) to the DC.

I was growing impatient by this time.

ACT -IV - Final Act

At this point, I fired up trusty old Wireshark after a packet capture with tcpdump.

Here is what I saw:

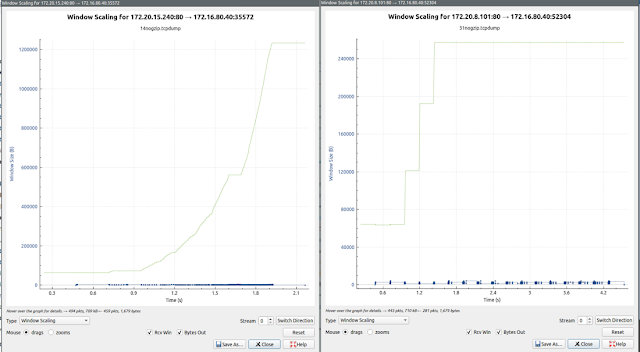

Leftmost is bare metal, right is the VM. X-Axis is 1200000 for bare metal, and about 240000 for the VM.

Now there is something, at last.

I did not get a chance to look at this further, due to other tasks from my own team. When I got back, I fired up sysctl.

sysctl -a | grep tcp

net.ipv4.tcp_congestion_

I got through to this from Google - https://www.uio.no/studier/emner/matnat/ifi/INF5072/v18/inf5072_example1.pdf

Figure 3 was all I needed.

I went back to the VM, changed the congestion algo and reran the cURL loop.

VM:

dnslookup:

0.002588 | connect: 0.254602 | appconnect: 0.000000 | pretransfer:

0.254696 | starttransfer: 0.510628 | total: 2.038362 | size: 775063

dnslookup:

0.002340 | connect: 0.255680 | appconnect: 0.000000 | pretransfer:

0.255761 | starttransfer: 0.511749 | total: 2.022469 | size: 719422

dnslookup: 0.008388 | connect: 0.260589 | appconnect: 0.000000 | pretransfer: 0.260655 | starttransfer: 0.516559 | total: 2.003774 | size: 719531

dnslookup: 0.008388 | connect: 0.260589 | appconnect: 0.000000 | pretransfer: 0.260655 | starttransfer: 0.516559 | total: 2.003774 | size: 719531

Bare metal:

dnslookup:

0.002593 | connect: 0.254832 | appconnect: 0.000000 | pretransfer:

0.254903 | starttransfer: 0.514009 | total: 2.145449 | size: 773954

dnslookup: 0.002504 | connect: 0.255856 | appconnect: 0.000000 | pretransfer: 0.256012 | starttransfer: 0.514497 | total: 2.071955 | size: 718803

dnslookup: 0.002650 | connect: 0.255524 | appconnect: 0.000000 | pretransfer: 0.255754 | starttransfer: 0.515312 | total: 2.066484 | size: 719941

dnslookup: 0.002504 | connect: 0.255856 | appconnect: 0.000000 | pretransfer: 0.256012 | starttransfer: 0.514497 | total: 2.071955 | size: 718803

dnslookup: 0.002650 | connect: 0.255524 | appconnect: 0.000000 | pretransfer: 0.255754 | starttransfer: 0.515312 | total: 2.066484 | size: 719941

This is what the packet capture looked like - Left side is the VM and right side is the bare metal.

Now, why did this occur? and what is the crucial difference that accounts for this?

I turned to LWN - https://lwn.net/Articles/701165/

"Most algorithms will continue to ramp up until they experience a dropped packet; BBR, instead, watches the bandwidth measurement described above. In particular, it looks at the actual delivered bandwidth for the last three round-trip times to see if it changes. Once the bandwidth stops rising, BBR concludes that it has found the effective bandwidth of the connection and can stop ramping up; this has a good chance of happening well before packet loss would begin."So now all that was left was to establish that packet loss indeed caused this, which would close this case. I looked at the packet capture - there were retransmissions in both captures.

Impatient, I fired up a ping with 0.01 second interval. There it was, 9.8% packet loss, mocking at me. This also explained why I could not reproduce the issue within the Colo or from the other Colo. Both had zero packet loss.

Well, there it was. An almost anti-climactic end to the adventure.

brr FTW!

Comments

Post a Comment